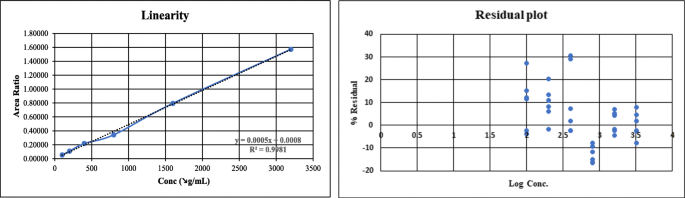

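# Weighted linear regression in Excel: how to

I'm looking for a fairly simple statistical tool: weighted linear regression. Assume the weight would be provided for each point, and the fit could "force through 0" or not.

Assume that we are in the standard regression setting where we have $n$ observations, responses $y \in \mathbb{R}^n$, and feature values $X \in \mathbb{R}^{n \times p}$, where $x_{ij}$ denotes the value of the $j$th feature for the $i$th observation. In ordinary least squares (OLS), we assume that the true model is

$$y = X\beta + \varepsilon,$$

where $\mathbb{E}[\varepsilon] = 0$ and $\mathrm{Cov}(\varepsilon) = \sigma^2 I$. Under the assumptions above, the Gauss-Markov theorem says that $\hat{\beta}_{OLS} = (X^T X)^{-1} X^T y$ is the best linear unbiased estimator (BLUE) for $\beta$.

In generalized least squares (GLS), instead of assuming that $\mathrm{Cov}(\varepsilon) = \sigma^2 I$, we assume instead that $\mathrm{Cov}(\varepsilon) = V$ for some known, non-singular covariance matrix $V$. Multiplying the model by $V^{-1/2}$ gives

$$V^{-1/2} y = V^{-1/2} X \beta + V^{-1/2} \varepsilon, \qquad \mathrm{Cov}(V^{-1/2} \varepsilon) = I.$$

Applying the OLS formula to this last equation, we get the generalized least squares estimate (GLS) for $\beta$:

$$\hat{\beta}_{GLS} = (X^T V^{-1} X)^{-1} X^T V^{-1} y.$$

Why not just apply the OLS formula to $y = X\beta + \varepsilon$ directly? That is because the assumptions for the Gauss-Markov theorem hold for the transformed equation, and so we can conclude that $\hat{\beta}_{GLS}$ is the best linear unbiased estimator (BLUE) for $\beta$ in this setup. Note that if we let $W = V^{-1}$ denote the inverse covariance matrix, then the GLS solution has a slightly nicer form:

$$\hat{\beta}_{GLS} = (X^T W X)^{-1} X^T W y.$$

One can think of weighted least squares (WLS) as a special case of GLS. In WLS, we assume that the covariance matrix for $\varepsilon$, $V$, is diagonal. That is, the error term for each observation may have its own variance, but the error terms are pairwise uncorrelated. If $\mathrm{Var}(\varepsilon_i) = \sigma_i^2$ for all $i$ and $W$ is the diagonal matrix such that $w_{ii} = 1/\sigma_i^2$, then

$$\hat{\beta}_{WLS} = (X^T W X)^{-1} X^T W y.$$

Another sense in which the least squares problem can be "weighted" is where the observations are assigned different weights. (We might do so, for example, if we know that some observations are more trustworthy than others.) Writing $x_i$ for the $i$th row of $X$ as a column vector, instead of minimizing $\sum_{i=1}^n (y_i - x_i^T \beta)^2$ we minimize

$$\sum_{i=1}^n w_i (y_i - x_i^T \beta)^2,$$

where the $w_i$'s are some known "weights" that we place on the observations. Note that

$$\sum_{i=1}^n w_i (y_i - x_i^T \beta)^2 = \sum_{i=1}^n \left( \sqrt{w_i}\, y_i - \sqrt{w_i}\, x_i^T \beta \right)^2.$$

Thus, if $W^{1/2}$ is a diagonal matrix with the $i$th diagonal entry being $\sqrt{w_i}$, solving the above is equivalent to performing OLS of $W^{1/2} y$ on $W^{1/2} X$:

$$\hat{\beta} = (X^T W X)^{-1} X^T W y.$$

So when selecting datasets for the fitting, you can also do weighting [...] to add weight for Y data, while if it is Orthogonal Distance Regression (Pro Only).

How to do a weighted regression in Excel. Step 1: Open your Excel spreadsheet with the appropriate data set. Organize your data to list the x-values in column A. Calculate the weighted amount of your data set by taking the natural log of your y-values.

Now we know that the data set shown above has a slope of 165.4 and a y-intercept of -79.85.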
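For readers who want to check the weighted least squares formula outside Excel, here is a minimal Python sketch using NumPy. It assumes one weight per point and an optional fit through the origin. The function names `weighted_linear_fit` and `scaled_ols_fit` and the sample numbers are hypothetical and chosen only for illustration. The first function uses the closed form $(X^T W X)^{-1} X^T W y$; the second performs OLS on data rescaled by $\sqrt{w_i}$, which should give the same answer.

```python
import numpy as np


def weighted_linear_fit(x, y, w, through_origin=False):
    """Fit y ~ intercept + slope * x by weighted least squares.

    Minimizes sum_i w[i] * (y[i] - intercept - slope * x[i])**2.
    Returns (slope, intercept); the intercept is 0.0 if through_origin is True.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    w = np.asarray(w, dtype=float)
    if x.shape != y.shape or x.shape != w.shape:
        raise ValueError("x, y and w must have the same shape")
    if np.any(w < 0):
        raise ValueError("weights must be non-negative")

    # Design matrix: the x column, plus a column of ones unless forced through 0.
    if through_origin:
        X = x[:, None]
    else:
        X = np.column_stack([np.ones_like(x), x])

    # Closed form beta = (X^T W X)^{-1} X^T W y with W = diag(w).
    XtW = X.T * w  # equals X.T @ np.diag(w) without building the n-by-n matrix
    beta = np.linalg.solve(XtW @ X, XtW @ y)

    if through_origin:
        return float(beta[0]), 0.0
    return float(beta[1]), float(beta[0])


def scaled_ols_fit(x, y, w):
    """Same fit (with intercept) via plain OLS of sqrt(w)*y on sqrt(w)*X."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    sw = np.sqrt(np.asarray(w, dtype=float))
    X = np.column_stack([np.ones_like(x), x])
    beta, *_ = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)
    return float(beta[1]), float(beta[0])


if __name__ == "__main__":
    # Made-up sample data, for illustration only.
    x = [1.0, 2.0, 3.0, 4.0, 5.0]
    y = [2.1, 3.9, 6.2, 7.8, 10.1]
    w = [1.0, 1.0, 0.5, 2.0, 1.0]

    print("closed form :", weighted_linear_fit(x, y, w))
    print("scaled OLS  :", scaled_ols_fit(x, y, w))
    print("through zero:", weighted_linear_fit(x, y, w, through_origin=True))
```

If the per-point variances $\sigma_i^2$ are known, passing `w = 1 / sigma**2` corresponds to the WLS case described above. Solving the normal equations directly is fine for a small, well-conditioned problem like this one; for larger or ill-conditioned designs, the rescaled `lstsq` route is usually the more numerically stable choice.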